☑ That's Good Enough

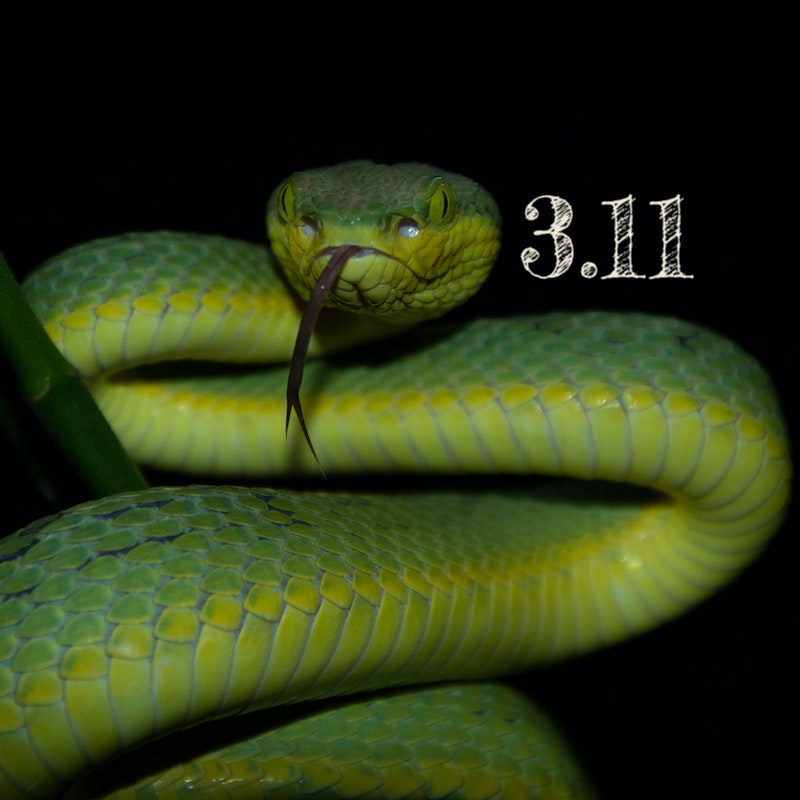

☑ What’s New in Python 3.11 - Performance Improvements

In this series looking at features introduced by every version of Python 3, we take a first look at the new Python 3.11 release, taking a look at some of the major performance improvements that have been introduced.

This is the 24th of the 36 articles that currently make up the “Python 3 Releases” series.

- What’s New in Python 3.0 Sun 24 Jan, 2021

- What’s New in Python 3.1 Mon 1 Feb, 2021

- … 19 more articles…

- What’s New in Python 3.10 - Other New Features Fri 18 Nov, 2022

- What’s New in Python 3.10 - Library Changes Fri 9 Dec, 2022

-

What’s New in Python 3.11 - Performance Improvements Fri 16 Dec, 2022

- What’s New in Python 3.11 - Exception Improvements Sat 31 Dec, 2022

- What’s New in Python 3.11 - Type Hint Improvements Tue 3 Jan, 2023

- … 8 more articles…

- What’s New in Python 3.13 - Library Changes Part 1 Sun 23 Feb, 2025

- What’s New in Python 3.13 - Library Changes Part 2 Wed 12 Mar, 2025

On October 24, 2022 the first stable release of Python 3.11 was made. I’m excited to say that’s less than two months prior to me writing this article, which means that my effort of investigating every Python 3.x release, which turned out to be rather more gargantuan than I anticipated — and more gargantuan even than this sentence — is approaching some sort of stable state. I never thought, way back in January 2021, that I wouldn’t have caught up with the latest release for nearly another two years.

Still, whatever the reason you’re perusing this article I very much doubt it’s to put up with my self-indulgent naval-gazing twaddle, so let’s tear aside the covers and see what treasures we can find.

In this article I’m going to cover what, to me, is one of the most interesting changes in this release: a series of improvements to CPython performance.

Introduction¶

Whatever reasons people have for using Python, blistering performance isn’t generally one of them. It’s not nearly as common to find cases where performance is genuinely critical as it was when I started coding professionally around the turn of the millennium, but nonetheless sometimes every nanosecond counts and in these cases then Python probably isn’t your language of choice.

That said, even if pure runtime performance isn’t the dominant factor in your choice of language, it’s always nice to stretch your hardware a little further by improving your code’s efficiency. There are a collection of such improvements in Python 3.11, and the beautiful thing is that you don’t need to change any of your code to take advantage of them.

These changes are the work of the Faster CPython project, and they claim the changes in Python 3.11 make it around 25% faster on average, or anything from 10-60% depending on specific use-cases.

There are two aspects which have been looked at, reducing startup time and running faster. The relative relevance of them will depend on how you invoke your Python code — as a frequently run script, or a long-running application. I’ll take a quick look at some of the measures used below — if you’re not really interested in performance or the details of how it’s been improved, I would advise that the rest of this article is probably not going to be all that interesting.

Reduced Startup Time¶

Python uses the __pycache__ directory to store pre-compiled versions of modules to reduce startup time — this has been a long-standing feature. However, there is still a startup overhead for these modules, as it requires filesystem access, parsing and heap allocations to support the modules.

In Python 3.11, some of the core modules which are always used by Python have been frozen to remove this overhead. Freezing a module involves pre-compiling it into the Python executable itself, and this was already done with some of the very core import-related code in Python. In 3.11, however, the set of modules has been extended to include things like abc, codecs, io, os, posixpath and site, which are used during the startup process for any code.

If you want to see the full list of modules frozen, you can take a look at Python/frozen.c in the Python source code. This list is generated at build time by the Tools/scrips/freeze_modules.py script (later moved to Tools/build) which you can read through to see the inputs to this process.

Apparently this shaves off 10-15% of the startup time for Python 3.11, which makes a big difference for short-lived scripts like command-line utilities. If, for some reason, faster startup time offends you — or, more likely, you need to do some low-level fiddling which relies on the conventional import process — then you can disable the use of the frozen modules by passing the -X frozen_modules command-line to the Python interpreter.

Streamlined Stack Frames¶

There are also a couple of improvements to improve runtime performance. The first is to streamline the stack frame1 creation process. Some specific enhancements in this area include:

- Moved stack frame contents from heap allocated memory to a contiguous array in memory, which improves cache locality and allows for simpler and faster memory management.

- Make heap allocations lazy, so debugging and memory management information like stack backtraces are only allocated when required.

- Reuse space on the C stack to remove the need for Python stack frames entirely in many cases.

Since one of the larger overheads in Python has always been for function execution, this is a great area to focus on, and performance tests show something like a 3-7% increase in speed from these changes.

One thing to note is that sometimes old-style stack frame objects are still required, for example by debuggers, sys._getframe() or inspect.currentframe() — in these cases, the objects are created on-demand. This means that if you use these diagnostic mechanisms then any performance measurements you make will be less inaccruate — but this shouldn’t be surprising, additional diagnostics typically spoils performance measurement anyway.

Inlined Python Code Function Calls¶

In this release, when the interpreter detects that Python code is calling into a Python function (as opposed to an extension module, etc.) then it now “inlines” that call at the C level, operating within the same C stack frame as the caller. This means that most Python function calls no longer consumer C stack space, which makes things faster — I’d imagine this is partly by avoiding the overhead of extending the stack, but also partly due to improved cache locality.

The benefit here is felt particularly in deeply recursive calls, where a 1.7x speedup was observed. It also would mean recursion could proceed deeper, although the default limit hasn’t been updated. The developer can always increase it with sys.setrecursionlimit(), but it seems to me that if you’re relying on recursion deeper than 1000 levels then you’re probably better off converting it to an iterative approach and maintaining your own stack.

As well as the speedup of this change in itself, this could also enable future optimisations in the eval loop, such as direct access to locals within a caller function, although I couldn’t find note of any such additional optimisations in 3.11.

It’s interesting to see some of the ideas from Stackless seeping back into CPython — first the development on coroutines, which are somewhat similar in concept to the microthreads in Stackless, and now avoiding the use of the C stack. I wonder if we’ll see any other ideas merging back across?

Specialising Adaptive Interpreter¶

The last part of the optimisations I’ll discuss is, to me at least, the most interesting — the implementation of specialisation within the CPython interpreter.

As usual I’ll do my best to explain things fully here, but if you want more in-depth discussion around the process, do check out PEP 659 — it’s well written and explains the general principles very well.

The concept is simple enough: the interpreter looks for cases where generic operations are carried out on the same types repeatedly, and swaps in specialised versions of those operations optimised for the type in question. For example, if a particular addition operation is always carried out on two int types, it’s wasteful to go through checks to see whether the objects project their own __add__() methods and so on — a simple implementation of addition in C saves significant time.

This is an approach that you may be aware of in relation to JIT compilers. CPython is not a JIT compiler, but research has shown that specialisation can accelerate interpreters as well.

The trick here is that the code must still cope with different types — just because a particular line of Python adds two int types a thousand times doesn’t mean that at some future point it’ll be used to concatenate two strings instead. The approach used to cope with this is to specialise aggressively but also adaptively, where the specialised version can be unwound very cheaply in cases where it’s called with different typed arguments.

How this is achieved is by replacing certain bytecode instructions that would benefit from specialisation with “adaptive” versions. These keep track of which types they’re called with, and if they’re called with the same type enough times they specialise themselves to a version that executes more quickly for that type. Hence, the bytecode is no longer immutable, but can update itself to implement specialisation.

As an example, lifted straight from the PEP, the LOAD_ATTR bytecode instruction retrieves the named attribute from the object on top of the stack and replaces that object with the value of the attribute. So under this optimisation, any LOAD_ATTR instructions would initially be replaced with LOAD_ATTR_ADAPTIVE, which tracks how many times it’s been called and initially just indirects back to the original LOAD_ATTR. However, once it’s collected enough information about its use, it jumps into an internal _Py_Specialize_LoadAttr() function which examines the collected type information and, if a suitable specialisation is found, replaces the LOAD_ATTR_ADAPTIVE with the specialised version.

Let’s suppose that particular instruction is always used to load an attribute from a module, then it’ll be replaced with LOAD_ATTR_MODULE. This specialised version should then perform much faster in future calls.

Because the adaptive instruction has been replaced, however, the specialised version must ensure the situation in which it’s called continue to be applicable for its specialisation. As a result, the specialised instructions check their argument types on each call, and if they’re not appropriate they indirect back to the original, which is LOAD_ATTR in this example.

In addition, to cater for cases where a particular use-case changes slowly over the life of the process, the specialised versions also maintain a saturating counter. This is decremented every time the arguments are not consistent with the specialised type, and if it reaches some low threshold, the instruction is de-specialised and reverts back to the adaptive version to begin the process once more.

Specialisation In Action¶

OK, this discussion is all very well, but I like to see these things in action. Fortunately we can do this using two new options to the dis.dis() function for disassembling Python bytecode. Normally the specialised versions of the instructions are hidden from disassembly, but you can pass adaptive=True to show them. Also, I’m going to pass show_caches=True to show CACHE instructions — these aren’t actually instructions per se, but reserved space in the bytecode that’s logically part of the preceding instruction for the adaptive instructions to store their various counters.

We’ll use about the simplest possible function I could think of it for this illustration. To start off, let’s see what the bytecode looks like before specialisation.

>>> import dis

>>> def my_function(arg):

... return arg.my_attr

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR 0 (my_attr)

6 CACHE 0

8 CACHE 0

10 CACHE 0

12 CACHE 0

14 RETURN_VALUE

Since all the function does is a simple attribute lookup on the argument, you can see the code is extremely simple. Here are the relevant instructions:

RESUME- A no-op which performs internal tracing and debugging checks — this is new in 3.11, but not relevant to the topic of performance improvements.

LOAD_FAST- Pushes a reference to local variable

argonto the stack. LOAD_ATTR- Pops the object on top of the stack, retrieves

my_attrfrom it and pushes the result back on to the stack. RETURN_VALUE- Returns the object on top of the stack to the caller.

We don’t see any specialisation here, since we haven’t used the function yet. Let’s call it a few times in the context of retrieving an attribute from an object instance.

>>> class MyClass:

... def __init__(self):

... self.my_attr = 123

...

>>> x = MyClass()

>>>

>>> for i in range(5):

... _ = my_function(x)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR 0 (my_attr)

6 CACHE 0

8 CACHE 0

10 CACHE 0

12 CACHE 0

14 RETURN_VALUE

We’ve called it 5 times with an instance of MyClass, and we see no change to the LOAD_ATTR instruction — it shouldn’t be surprising that we haven’t specialised, as we’re still below the threshold for specialisation, but I’m a little surprised it’s not LOAD_ATTR_ADAPTIVE. I’m assuming this is a consequence of it being the default generated bytecode and hence hasn’t been updated yet.

Another interesting aspect is that all those CACHE values are still the same — this is because the adaptive version of LOAD_ATTR has to store a count of different argument types, so the simple use of counters isn’t sufficient. I assume it has some storage allocated somewhere other than the bytecode for this purpose.

Let’s call it another 5 times and see what happens.

>>> for i in range(5):

... _ = my_function(x)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_INSTANCE_VALUE 0 (my_attr)

6 CACHE 0 (counter: 53)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

Bingo, you can see here that LOAD_ATTR has been replaced with LOAD_ATTR_INSTANCE_VALUE. So the threshold for specialisation in this case is less than ten, which is fairly small — this means that even comparatively short-lived scripts could potentially benefit from these changes.

You’ll also see those CACHE entries have updated — I won’t pretend to be any kind of an expert on these, but I’m fairly confident that the counter: 53 entry is the saturation counter I mentioned earlier. We’ll see this changing in a moment.

For now, we feed it a lot more instances of the same use-case.

>>> for i in range(500):

... _ = my_function(x)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_INSTANCE_VALUE 0 (my_attr)

6 CACHE 0 (counter: 53)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

As you might expect, no changes here — the saturation counter stays at a fixed limit. Just because we call it 500 times in this case isn’t good evidence that we should wait 500 calls with different types to change the specialisation — code execution often follows patterns which shift over time, and weighting things too heavily in favour of past behaviour leads to suboptimal results.

Now we’ll start using my_function() in a different case — a class with __slots__. This requires a different specialisation than LOAD_ATTR_INSTANCE_VALUE, so let’s see how the code reacts.

>>> class MySlotsClass:

... __slots__ = ["my_attr"]

... def __init__(self):

... self.my_attr = 456

...

>>> y = MySlotsClass()

>>> my_function(y)

456

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_INSTANCE_VALUE 0 (my_attr)

6 CACHE 0 (counter: 52)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

After a single invocation we can see that the correct value is being returned, because LOAD_ATTR_INSTANCE_VALUE has detected the use-case is different and fallen back on LOAD_ATTR behind the scenes.

We can also see that the counter has decremented to counter: 52, which is consistent with this being the saturation counter. Since it’s currently just over fifty, let’s call it that many times and check whether it’s changed the specialisation.

>>> for i in range(50):

... _ = my_function(y)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_INSTANCE_VALUE 0 (my_attr)

6 CACHE 0 (counter: 2)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

Great, everything is working as expected — we’ve almost, but not quite, decremented down to zero. So if we invoke three more times, we’d expect the specialisation to revert.

As an interesting aside, although I don’t show it here I did try calling again with the original type a few times — it’s worth noting that the saturation counter only decrements, it won’t increment again no matter how many times the specialisation is correct. So it’s a hard limit on the number of “specialisation misses” that you can have before reverting back to the adaptive version.

>>> for i in range(3):

... _ = my_function(y)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_ADAPTIVE 0 (my_attr)

6 CACHE 0 (counter: 485)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

We can see that’s exactly what’s happened — we’ve reverted back to LOAD_ATTR_ADAPTIVE, where it will be learning a new specialisation to use. It’s interesting to note that it doesn’t revert directly to the new specialisation — this is because the tracking itself is only performed by LOAD_ATTR_ADAPTIVE, which is why the specialisations get away with a single counter (i.e. was it the correct type or not) as opposed to having to actually store the actual types used.

It’s also interesting to note that the counters have updated for LOAD_ATTR_ADAPTIVE — again, I can only assume the initial use of LOAD_ATTR is some special case, but I don’t know if it’s an artifact of the bytecode itself or some behaviour in dis.dis() which hides it until now.

Let’s call it three more times again, twice in a row.

>>> for i in range(3):

... _ = my_function(y)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_ADAPTIVE 0 (my_attr)

6 CACHE 0 (counter: 437)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

>>> for i in range(3):

... _ = my_function(y)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_ADAPTIVE 0 (my_attr)

6 CACHE 0 (counter: 389)

8 CACHE 0 (version: 294)

10 CACHE 0

12 CACHE 0 (index: 0)

14 RETURN_VALUE

We can see that the counter decrements each time, but by 16 at a time — I wouldn’t like to speculate on the reason for this, but I’m sure there’s a sensible one.

When this counter hits zero, we expect it to re-assess the specialisation, so as a final experiment let’s hit it that many times.

>>> for i in range(25):

... _ = my_function(y)

...

>>> dis.dis(my_function, adaptive=True, show_caches=True)

1 0 RESUME_QUICK 0

2 2 LOAD_FAST 0 (arg)

4 LOAD_ATTR_SLOT 0 (my_attr)

6 CACHE 0 (counter: 53)

8 CACHE 0 (version: 295)

10 CACHE 0

12 CACHE 0 (index: 8)

14 RETURN_VALUE

We see that it’s now switched over to LOAD_ATTR_SLOT, the specialisation for this new case. The saturation counter is once again at 53, and I’m also interested to note that version has increased to 295 — I’m guessing this somehow tracks how many times the specialisation has changed, but as I mentioned earlier I’m no expect, just making educated guesses about what I can see at this stage.

Phew, that was a little long-winded, but hopefully seeing the specialisation change is interesting and/or useful — I know that it definitely helped me get a more confident understanding of what’s going on under the hood.

Available Specialisations¶

Outside the scope of the PEP is discussing specific specialisations that have been implemented, but the release notes has a list of them so I’ve reproduced it here for convenience.

- Binary operations:

x + x - Binary arithmetic operations for builtin types like

strandfloattake fast paths.

Up to 10% speedup. - Subscripting:

a[i]anda[i] = x - For builtin types the underlying data structure is directly indexed. Custom

__getitem__()methods are inlined.

Up to 10-20% speedup. - Function calls:

f(arg) - Calls to builtin C functions for builtin types (e.g. calling

len()onstr) directly call into underlying C-code instead of following the usual calling convention.

Up to 20% speedup. - Load global variable:

print(...) - The index in the globals namespace is cached, so no namespace lookup is required.

Similar optimisation already in 3.8 for some cases, but expanded in 3.11. - Attribute access:

obj.attr - Similar to globals, the index is cached.

Similar optimisation already in 3.10 for some cases, but expanded in 3.11. - Method access:

obj.method() - Address of the method function is cached, requiring no namespace lookups, even for long inheritance chains.

Up to 10-20% speedup. - Unpack sequence:

*sequence - Specialised for common containers like

listandtuple, bypassing calling convention.

Up to 8% speedup.

Conclusion¶

This article has been a slightly odd one to start my look at Python 3.11, but as soon as I read the headline savings of 25% of speed, I was immediately interested to discover how it was achieved. I’m impressed by how much work has gone into these changes, and moreover at their effectiveness. I’m sure I’m far from the only developer who’s spent hours implementing some complicated optimisation or other that we’re certain will double performance, only to find it barely shaves a couple of percent off.

The other impressive thing is how they’ve managed to be implemented in such a way that developers don’t need to make any changes to take advantage of them — except for running their code under Python 3.11, of course.

Anyway, that’s it for this article. Next time I’ll go through some of the more conventional langauge changes in this release.

-

A stack frame is a slice of the stack which contains the context for a particular function — this includes function arguments, local variables and the return address for when execution is complete. ↩